A fundamental change has occurred in cybersecurity. Adversarial AI agents are probing financial infrastructure at machine speed. We built an autonomous agent to match them.

The security community has begun documenting what many teams have suspected for months: AI-driven attack campaigns are targeting financial infrastructure at scale. Anthropic recently disclosed the first documented AI-orchestrated cyber espionage campaign. Project Glasswing brought together twelve major technology and financial institutions after a frontier model identified thousands of zero-day vulnerabilities across every major operating system and browser, confirming that AI offensive capabilities have surpassed all but the most skilled human operators. U.S. Cyber Command has warned explicitly that financial institutions are a primary target as geopolitical tensions escalate. These are not theoretical risks. They are structured, adaptive campaigns that enumerate endpoints, chain vulnerabilities, and move too fast and too systematically to be human-operated.

We see this in our own telemetry. Rogo serves the largest financial institutions in the world, and our security team continuously monitors for and blocks exactly these patterns: rapid endpoint enumeration, automated vulnerability chaining, and request signatures that fingerprint as machine-generated. The activity is real, it is ongoing, and it is targeting every company in this space. We believe these patterns are serious enough to shape our cybersecurity defense strategy.

Initially, we ran four manual penetration tests a year when most firms run one. A team of consultants would spend two weeks probing our application, produce a PDF, and move on. That's roughly 40 business days of testing out of 365. Our reality as a hypergrowth AI startup makes that gap worse: we deploy multiple times per day, and the time-to-exploit for critical CVEs is often under 24 hours. Even at four tests a year, roughly 325 days go untested, and most teams, running a single test, are uncovered for longer and don't know it.
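The coverage arithmetic is easy to check. The sketch below assumes each engagement lasts ten business days (two weeks) and, like the figures above, subtracts tested days from a 365-day year. The function name is hypothetical.

```python
def uncovered_days(tests_per_year: int, days_per_test: int = 10, year_days: int = 365) -> int:
    """Days in a year with no active penetration test, under the stated assumptions."""
    tested = tests_per_year * days_per_test
    return max(year_days - tested, 0)


print(uncovered_days(4))  # four tests a year -> 325
print(uncovered_days(1))  # one test a year -> 355
```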

The math didn't look pretty, and from first principles, AI seemed to be making it worse. A static testing cadence cannot be the foundation of a modern AI-native security program when the threat landscape shifts daily. At best, it's a paper trail and a compliance checkbox.

Rogo uses AI throughout the entire company. Security is no exception.

Introducing Sisyphus

We call it Sisyphus. The boulder rolls back down every time you deploy. The question is whether you push it back up four times a year or every day. The name reflects the reality of security work: it is a continuous effort to reinforce and rebuild, and there is no "done."

Sisyphus is an autonomous security agent that runs continuous offensive testing against our own infrastructure. It pen tests us once or twice a day, calibrated to deployment cadence, so that every code change is validated before the window of exposure opens. It doesn't replace human security researchers. It extends them from periodic engagements to persistent coverage.

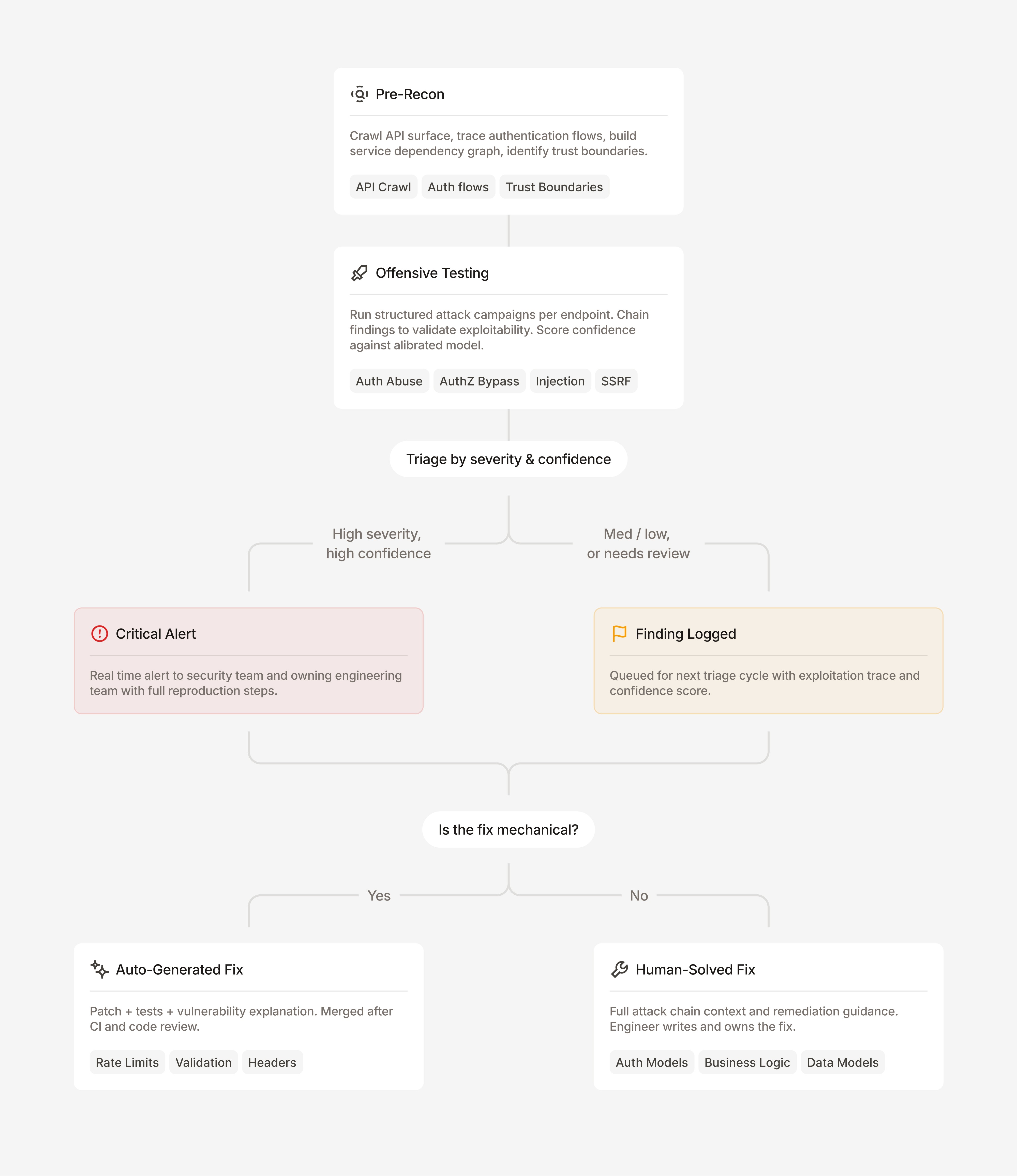

The system works in three phases: pre-recon, offensive testing, and automated remediation.

How Sisyphus maps the terrain before attacking

Before Sisyphus sends a single payload, it builds a model of the attack surface. It crawls our API endpoints, traces authentication flows, and maps trust boundaries between services.

This recon layer updates continuously as new endpoints deploy. The attack surface model is never stale. That turned out to matter more than we expected (more on this below).
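As a rough illustration of what a continuously updated attack surface model could look like, here is a minimal sketch. The class names, fields, auth labels, and example paths are all assumptions made for illustration, not Sisyphus internals, and trust-boundary mapping is left out for brevity.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Endpoint:
    method: str
    path: str
    auth: str     # e.g. "none", "session", "oauth" (illustrative labels)
    service: str  # owning service


@dataclass
class AttackSurface:
    endpoints: dict = field(default_factory=dict)

    def sync(self, discovered):
        """Replace the model with the latest crawl and return new or changed endpoints."""
        latest = {(e.method, e.path): e for e in discovered}
        changed = [e for key, e in latest.items() if self.endpoints.get(key) != e]
        self.endpoints = latest
        return changed


# Hypothetical crawl results before and after a deploy.
surface = AttackSurface()
health = Endpoint("GET", "/health", "none", "gateway")
search = Endpoint("POST", "/v1/search", "session", "search")
surface.sync([health])
new_targets = surface.sync([health, search])
```

Here `new_targets` contains only the endpoint that appeared in the second crawl, which is what a testing phase would prioritize after a deploy.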

How autonomous offensive testing works

Sisyphus runs structured attack campaigns across categories: authentication abuse, authorization bypass, injection, SSRF, LLM exploits, and others. Each campaign is informed by the recon phase and tailored to the specific endpoint under test.

The agent doesn't just check boxes. When it finds a potential authentication bypass, it chains that finding with other weaknesses to validate exploitability. A medium-confidence OAuth flow issue becomes a high-confidence session hijack when the agent demonstrates it can actually replay tokens against a live callback endpoint.
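The promotion from medium to high confidence can be sketched as follows. This is a simplified illustration, assuming each exploit step is a callable that reports success or failure. The `Finding` type, the confidence labels, and the stubbed steps are hypothetical.

```python
from dataclasses import dataclass, replace
from typing import Callable, Sequence


@dataclass(frozen=True)
class Finding:
    title: str
    confidence: str  # "low", "medium", or "high" (labels assumed)


def validate_chain(finding: Finding, steps: Sequence[Callable[[], bool]]) -> Finding:
    """Promote a finding to high confidence only if every exploit step succeeds."""
    if steps and all(step() for step in steps):
        return replace(finding, confidence="high")
    return finding


# Stubbed steps stand in for real requests against a test environment.
oauth_issue = Finding("OAuth callback accepts replayed token", "medium")
confirmed = validate_chain(oauth_issue, [lambda: True, lambda: True])
unconfirmed = validate_chain(oauth_issue, [lambda: True, lambda: False])
```

A finding whose chain fails at any step keeps its original confidence rather than being discarded, so it can still be triaged.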

What happens when Sisyphus finds something

When Sisyphus confirms an exploitable finding, two things happen at once.

First, critical alerts fire immediately for high-confidence, high-severity issues. These go directly to the security team and the owning engineering team with full reproduction steps. Think Wiz-style alerts, but generated from actual exploitation rather than static analysis.

Second, the system generates a fix. The decision between full automation and human review comes down to one question: does the fix require understanding business intent and context?

For mechanical fixes (missing rate limiting, input validation gaps, header misconfigurations), Sisyphus opens a PR with the patch, tests, and a detailed explanation of the vulnerability. These run through CI checks and queue for a final human review before merging.

For complex fixes (e.g. authorization model changes, authentication flow redesigns, business logic corrections), Sisyphus opens a ticket with a proposed approach, flags it for human review, and links the full exploitation chain so the reviewer has complete context.
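The routing described above can be sketched in a few lines. This is a simplified sketch, not our production logic: the category names, severity labels, and `Action` values are assumptions chosen to mirror the prose.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALERT = "alert security and owning team"
    PULL_REQUEST = "open PR with patch and tests"
    TICKET = "open ticket with proposed approach"


# Hypothetical category labels for fixes that need no business context.
MECHANICAL = {"missing_rate_limit", "input_validation_gap", "header_misconfiguration"}


@dataclass(frozen=True)
class ConfirmedFinding:
    category: str
    severity: str    # "low", "medium", "high", "critical" (assumed scale)
    confidence: str  # "low", "medium", "high" (assumed scale)


def route(finding: ConfirmedFinding) -> list:
    """Return the actions for a confirmed finding; every fix still gets human review."""
    actions = []
    if finding.confidence == "high" and finding.severity in {"high", "critical"}:
        actions.append(Action.ALERT)
    if finding.category in MECHANICAL:
        actions.append(Action.PULL_REQUEST)
    else:
        actions.append(Action.TICKET)
    return actions


rate_limit = route(ConfirmedFinding("missing_rate_limit", "medium", "high"))
authz = route(ConfirmedFinding("authorization_model", "critical", "high"))
```

Anything not on the mechanical list defaults to a ticket, so an unrecognized category errs toward human judgment.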

What proactive testing actually looks like

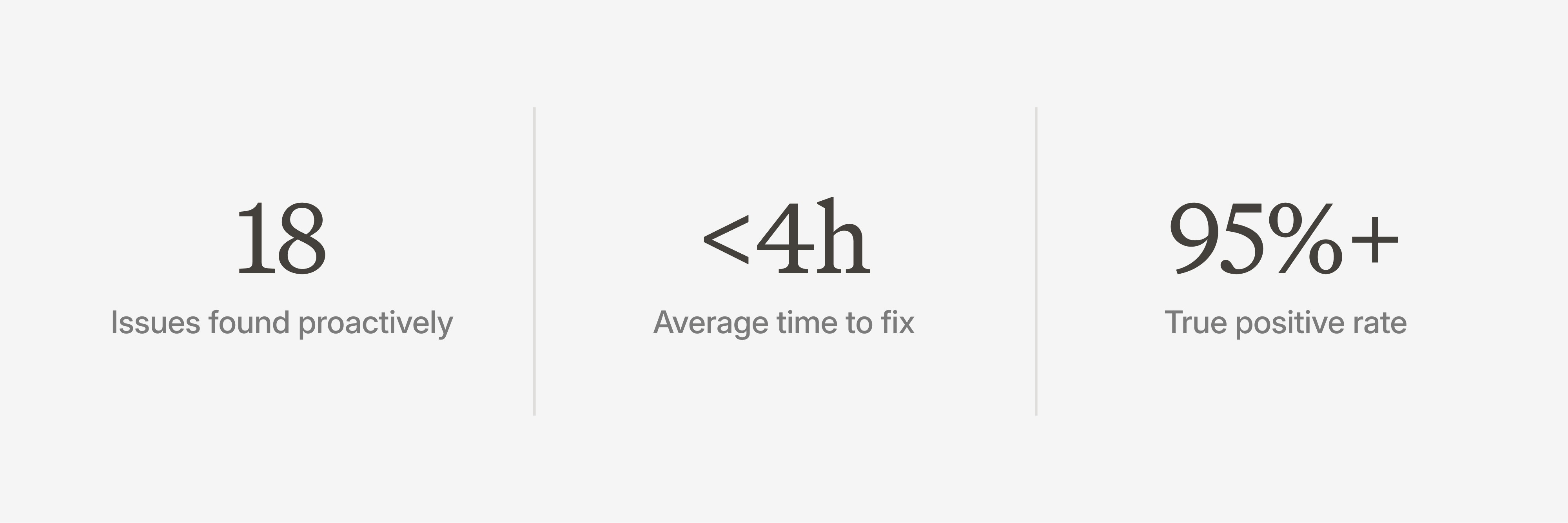

Every application has vulnerabilities. The difference is whether you find them first. One week after our most recent external penetration test wrapped up, Sisyphus found 18 additional exploitable issues in a single afternoon that the manual test hadn't reached. Most were multi-step chains that required correlating weaknesses across different layers of the stack, the kind of composite findings that are structurally difficult for time-boxed manual testing to surface. We were surprised by how diverse and sophisticated the findings were, spanning database, application, and middleware layers.

All 18 were found and fixed the same day. The mechanical fixes had PRs through CI and human review within hours. The complex ones had tickets with full exploitation context waiting for engineers before they left for the evening. That's the point: continuous testing compresses the window between vulnerability and fix from months to hours.

Every finding came with a full exploitation trace, a confidence score, and (for most categories) a working proof of concept. Each finding links to the full agent log: every request sent, every response analyzed, every decision the agent made during exploitation. We're not reading a summary. We're reading the pen tester's notebook.

Here's how a session flows end to end:
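As a rough sketch of that flow, assuming the three phases described earlier map onto three function calls (all names, paths, and stub behaviors below are hypothetical):

```python
def run_session(recon, attack, remediate) -> list:
    """Run one session: map the surface, test it, and remediate confirmed findings."""
    surface = recon()                                           # phase 1: pre-recon
    confirmed = [f for f in attack(surface) if f["confirmed"]]  # phase 2: offensive testing
    return [remediate(f) for f in confirmed]                    # phase 3: remediation


# Stub phases standing in for the real agent.
def recon():
    return ["/v1/login", "/v1/search"]


def attack(surface):
    return [{"endpoint": path, "confirmed": path == "/v1/login"} for path in surface]


def remediate(finding):
    return f"fix queued for {finding['endpoint']}"


results = run_session(recon, attack, remediate)
```

Only findings the testing phase confirms reach remediation. Unconfirmed candidates are dropped in this sketch, though a real system would likely log them.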

What we got wrong (and what we learned)

Pre-recon is everything. We initially underinvested in the recon phase. Once we gave the agent a real understanding of our application and codebase before it started testing, false positives dropped and exploitable chains increased substantially.

Our first confidence scores were too noisy. The initial version over-reported medium-confidence findings. We recalibrated by comparing Sisyphus's assessments against our security team's independent triage of the same findings. High-confidence findings now carry a >95% true positive rate.
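The recalibration check amounts to measuring, per confidence bucket, how often the security team's independent triage agreed that a finding was real. A minimal sketch, with invented sample data and a hypothetical function name:

```python
from collections import defaultdict


def true_positive_rate_by_bucket(records):
    """records: iterable of (agent_confidence, human_confirmed) pairs."""
    totals = defaultdict(int)
    confirmed = defaultdict(int)
    for bucket, is_real in records:
        totals[bucket] += 1
        confirmed[bucket] += int(is_real)
    return {bucket: confirmed[bucket] / totals[bucket] for bucket in totals}


# Invented triage outcomes, for illustration only.
sample = (
    [("high", True)] * 19 + [("high", False)]
    + [("medium", True)] * 3 + [("medium", False)] * 2
)
rates = true_positive_rate_by_bucket(sample)
```

If a bucket's rate falls below its target, the thresholds that assign findings to that bucket are the thing to adjust.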

We drew the auto-fix line in the wrong place at first. We tried auto-generating fixes for more complex issues. The boundary we landed on is simple: if the fix touches complex application logic, business rules, or data models, a human reviews it. If it's purely additive (adding a rate limit, adding validation, adding a header), it's safe to automate.

Continuous testing changed how our teams behave. This one we didn't predict. When vulnerabilities surface in hours instead of months, engineers fix them faster. Not because of escalation pressure, but because the context is fresh. The developer who wrote the endpoint yesterday fixes the vulnerability today, instead of a different developer reverse-engineering intent six months later.

Where we're taking this next

We're expanding Sisyphus into infrastructure-layer testing beyond the application surface, dependency and supply chain analysis feeding into the same finding pipeline, and cross-service attack chains that identify composite vulnerabilities invisible to single-service testing.

None of this replaces human review or our external penetration testing partners. Our pen testers found issues Sisyphus didn't, the kind of creative, logic-driven exploits that require human intuition. Sisyphus handles the breadth. They bring the depth. Both get better when the other exists.

The goal isn't to build the best pen testing tool. It's to make continuous security validation a default, as automatic and expected as CI running on every commit. Your test suite checks that your code works. Sisyphus checks that your code is safe.

A note on sharing

We're publishing this not as a product announcement but because the threat landscape is evolving faster than any single organization can track. The techniques described here will be used by many more attackers. Industry threat sharing, improved detection methods, and stronger defensive tooling are all critical. We intend to continue sharing what we learn, both about the threats the industry faces and the defenses we build.

We encourage security teams across financial services to experiment with applying AI for defense in areas like continuous pen testing, SOC automation, threat detection, and vulnerability assessment. One of the advantages of being an AI-native company is the ability to address AI-native threats. The cost of offensive AI is dropping. The organizations that invest in defensive AI now will be the ones best positioned for what's coming.
